Artificial Intelligence Web: How AI Is Reshaping Browsers, Search, and the Entire Web Experience

The web you’re browsing today looks nothing like it did three years ago. Every search query, product page, and dashboard is quietly being transformed by artificial intelligence running directly in your browser, powering the search results you see, and automating tasks you used to do manually. Artificial intelligence (AI) is the capability of computational systems to perform tasks typically associated with human intelligence, such as learning, reasoning, problem-solving, perception, and decision-making. This is the artificial intelligence web, and if you’re building products, marketing websites, or leading a tech team, understanding it isn’t optional anymore.

This guide is for web developers, product managers, and digital marketers who need to understand and adapt to the AI-driven web. Staying ahead of these changes is critical for anyone building or managing web products in 2025 and beyond.

This guide breaks down what the AI web actually means in 2025, how it’s changing user experiences, and what your team can do about it without drowning in the daily flood of AI announcements.

Key Takeaways

The “artificial intelligence web” refers to AI models and agents embedded directly in browsers, websites, and search results, not just standalone chatbots like ChatGPT running in separate tabs.

By 2026, AI has moved from a supporting feature to the core fabric of modern websites, being integrated across the entire web development lifecycle, from conceptualization to maintenance. Websites now feature specialized AI agents that autonomously execute complex tasks, shifting the user experience toward achieving specific outcomes.

AI search experiences from Google, Perplexity, and Microsoft are fundamentally changing how users find and consume web content, with SparkToro’s clickstream studies putting zero-click Google searches at roughly 58-65%.

Browser-native AI via WebGPU and WebAssembly now allows running models like Phi-3 and Llama 3.2 directly on your device, offering privacy and latency benefits.

Regulation is coming fast: the EU AI Act is phasing in from 2024-2026, with transparency requirements that affect any web property using generative AI.

Weekly-curated sources like KeepSanity AI help web teams track only the AI updates that materially change how they should build or ship products, without the daily noise.

What Is the “Artificial Intelligence Web” Today?

The artificial intelligence web describes the fusion of AI capabilities with every layer of the modern web stack. This isn’t about visiting a chatbot site. It’s about AI models running inside your browser, AI-native websites that generate and personalize content in real time, and search engines that synthesize answers before you ever click a link.


The evolution has been gradual, then sudden. Early recommender systems in the 2000s (think Netflix suggestions) marked the first fusion of machine learning with web experiences. The deep learning boom around 2012 brought neural networks that could recognize images in web apps. The 2017 transformer architecture paper laid the groundwork for efficient natural language processing at web scale. Then came the generative explosion: ChatGPT launched in November 2022, and by 2024-2025, Google’s Gemini updates were powering AI overviews directly in search results.

The web experience has shifted from static pages → interactive apps → AI-augmented surfaces that summarize, generate, and personalize content on demand. Today, users encounter AI before they encounter your website.

Core concepts defining the AI web:

Models running client-side in browsers via WebGPU and WebAssembly

Search engines using large language models to synthesize answers from multiple sources

Websites that co-create content with users through natural language interfaces

AI agents that browse, click, and complete workflows autonomously

Personalization engines that adapt in real-time based on context and behavior

KeepSanity AI focuses specifically on curating the weekly breakthroughs that materially change what AI on the web can do (major browser features, new model classes, regulatory shifts), filtering out the 80% noise that fills daily newsletters.

How AI Is Reshaping the Web Browsing Experience

Users in 2025 often see AI-generated overviews, answer boxes, and chat sidebars before they ever see the original web pages they’re searching for. The traditional flow of “search → click → read” has been disrupted by AI systems that read the web for you and present synthesized answers upfront.

This shift is powered by several major products that have reshaped search behavior over the past two years:

| Product | Launch | Key Feature | Impact |
|---|---|---|---|
| Google AI Overviews (formerly SGE, Gemini-powered) | Rolled out widely 2024 | AI-generated summaries atop search results | 20-30% reduction in click-through for informational queries |
| Microsoft Copilot (originally Bing Chat) | February 2023 | GPT-4 powered multimodal overviews | Cited bullet points summarizing 20+ pages |
| Perplexity AI | Growing 2023-2025 | Answer-first interface with 5-10 citations | 10M+ monthly users by 2025 |

These systems crawl and index the web, then use large language models to synthesize and cite sources. SparkToro’s clickstream studies have put zero-click Google searches at roughly 58-65%, meaning most users get what they need without ever visiting the source site.

Conversational browsing extends beyond search. Virtual assistants now remember context across tabs, auto-summarize long articles (condensing 5,000-word pieces to 300 words with 90% fidelity), and handle up to 50-turn dialogue chains. The UX patterns are becoming familiar: persistent chat sidebars, inline citations as expandable cards, and follow-up question prompts.

Implications for website owners:

Structured data adoption (JSON-LD) is up 40% year-over-year as sites optimize for AI citation

Original research and unique data see 15-25% citation uplifts in AI overviews

E-E-A-T signals (Experience, Expertise, Authoritativeness, Trustworthiness) increasingly determine whether AI systems surface and link to your content

Sites relying on simple informational content face declining organic traffic, while those with unique perspectives gain visibility
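As a rough illustration of the structured-data point above, the following sketch builds a schema.org `Article` description as JSON-LD. The vocabulary (`@context`, `@type`, `headline`, `author`, `datePublished`) comes from schema.org; the `ArticleMeta` shape, the helper names, and the sample values are hypothetical and only show the general pattern.

```typescript
// Hypothetical input shape for this sketch; not part of any library.
interface ArticleMeta {
  headline: string;
  authorName: string;
  datePublished: string; // ISO 8601 date, e.g. "2025-01-15"
  url: string;
}

// Serialize a schema.org Article as JSON-LD.
function buildArticleJsonLd(meta: ArticleMeta): string {
  const doc = {
    "@context": "https://schema.org",
    "@type": "Article",
    headline: meta.headline,
    author: { "@type": "Person", name: meta.authorName },
    datePublished: meta.datePublished,
    mainEntityOfPage: meta.url,
  };
  // Escape "<" so text inside the JSON cannot close the enclosing <script> tag.
  return JSON.stringify(doc).replace(/</g, "\\u003c");
}

// Wrap the JSON-LD in the script element that crawlers look for.
function toScriptTag(jsonLd: string): string {
  return `<script type="application/ld+json">${jsonLd}</script>`;
}

// Sample values are made up for illustration.
const sampleJsonLd = buildArticleJsonLd({
  headline: "How AI Is Reshaping the Web",
  authorName: "Jane Doe",
  datePublished: "2025-01-15",
  url: "https://example.com/ai-web",
});
const sampleTag = toScriptTag(sampleJsonLd);
```

The resulting tag would normally be rendered into the page `<head>` on the server, so that crawlers see it without executing JavaScript.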

AI Inside the Browser: Models, Extensions, and On-Device Intelligence

Since roughly 2023, browsers have been gaining APIs that allow running AI models directly on laptops and phones, with no cloud roundtrip required. This represents a fundamental shift in where computation happens on the web.

The key technologies enabling browser-native AI include:

WebGPU: Stable in Chrome 113 (May 2023) and Edge, allowing GPU-accelerated AI inference without plugins. High-end GPUs can execute matrix multiplications at 100+ TFLOPS.

WebAssembly (Wasm): Enables loading quantized models like Llama 3.2 (1B and 3B parameter variants) and Phi-3 (3.8B parameters, April 2024) directly in browsers.

WebNN (draft 2024): Piloted in Chrome Canary for ML operator acceleration, with Safari support expected by late 2025.

Apple Core ML: Integrated into Safari 17 (2023) for on-device vision and language models, powering features like Live Text translation.

What does this mean in practice? Users experience AI features without their data ever leaving the device. Libraries like Transformers.js and ONNX Runtime Web bundle quantized models that achieve inference speeds of 20-50 tokens per second on consumer laptops. A 3.8B-parameter model like Phi-3 compresses from roughly 7.6GB (FP16) to about 2GB (INT4) through quantization.
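The quantization arithmetic is simple enough to check by hand: weight storage is roughly parameter count times bits per weight, divided by eight. The sketch below is a weights-only estimate under that assumption; it ignores activations, the KV cache, and per-format overhead, and the function name is illustrative.

```typescript
// Weights-only size estimate in decimal gigabytes.
// paramsBillions: parameter count in billions (3.8 for a 3.8B model).
// bitsPerWeight: 16 for FP16, 8 for INT8, 4 for INT4.
function estimateWeightsGB(paramsBillions: number, bitsPerWeight: number): number {
  // (params * 1e9) * (bits / 8) bytes, divided by 1e9 bytes per GB.
  return (paramsBillions * bitsPerWeight) / 8;
}

const phi3Fp16GB = estimateWeightsGB(3.8, 16); // about 7.6
const phi3Int4GB = estimateWeightsGB(3.8, 4);  // about 1.9
const sevenBInt4GB = estimateWeightsGB(7, 4);  // about 3.5
```

Real downloads tend to be somewhat larger than these figures because quantized formats store scaling factors and often keep some layers at higher precision.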

Common in-browser AI features today:

Autocomplete for forms and text inputs

Grammar and tone suggestions in textareas (Grammarly processes 1M+ daily edits with fine-tuned BERT variants)

Instant translation without server calls

In-page summarization via extensions

Image generation (Stable Diffusion XL running via Wasm at 5-10 seconds per image)

The privacy and latency benefits are significant. Local execution means no PII transmission, whereas cloud APIs have been audited to leak 1-2% of prompts. Response times drop to sub-200ms for autocomplete versus 1-3 seconds for cloud roundtrips.

Developers access these capabilities through JavaScript libraries, web workers that offload inference to avoid UI blocking, and open-source runtimes bundling 7B models under 4GB download. Challenges remain: memory footprints (Phi-3 needs 2-4GB RAM) and battery drain (20-30% higher on mobile devices).
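One way to act on these trade-offs is to pick a runtime per device at load time. The sketch below is a hypothetical decision helper, not a library API: the `Capabilities` shape, the function name, and the "twice the model size" memory headroom are all assumptions. In a browser, the flags could be filled from `"gpu" in navigator`, `typeof WebAssembly === "object"`, and `navigator.deviceMemory` (a coarse value that only some browsers expose).

```typescript
type Runtime = "webgpu" | "wasm" | "cloud";

// Hypothetical capability snapshot gathered by the page at startup.
interface Capabilities {
  hasWebGPU: boolean;
  hasWasm: boolean;
  deviceMemoryGB?: number; // undefined when the browser does not report it
}

function chooseRuntime(caps: Capabilities, modelGB: number): Runtime {
  // Assumed rule of thumb: want at least 2x the model size in reported memory.
  const memory = caps.deviceMemoryGB;
  const fitsInMemory = memory === undefined || memory >= modelGB * 2;
  if (!fitsInMemory) return "cloud";
  if (caps.hasWebGPU) return "webgpu"; // GPU-accelerated local inference
  if (caps.hasWasm) return "wasm";     // CPU fallback, slower but widely available
  return "cloud";                      // no local option, call a hosted API
}

const laptopChoice = chooseRuntime({ hasWebGPU: true, hasWasm: true, deviceMemoryGB: 8 }, 2);
const budgetPhoneChoice = chooseRuntime({ hasWebGPU: true, hasWasm: true, deviceMemoryGB: 2 }, 2);
```

Whichever local runtime is selected, inference would typically run inside a web worker so that the main thread stays responsive.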

AI-Powered Web Experiences: From Content to Apps

Websites are becoming AI-native products. They’re not just displaying content; they’re co-creating it with users through text generation, image synthesis, code suggestions, and data visualizations that adapt in real time.

This transformation spans every category of web application:

Media and Blogs

Media and blogs now use AI to generate personalized article summaries, highlights, and translations into 70+ languages. Multilingual content that once required human translators can be produced on-demand using models like Llama 3.1 405B with NLLB distillation.

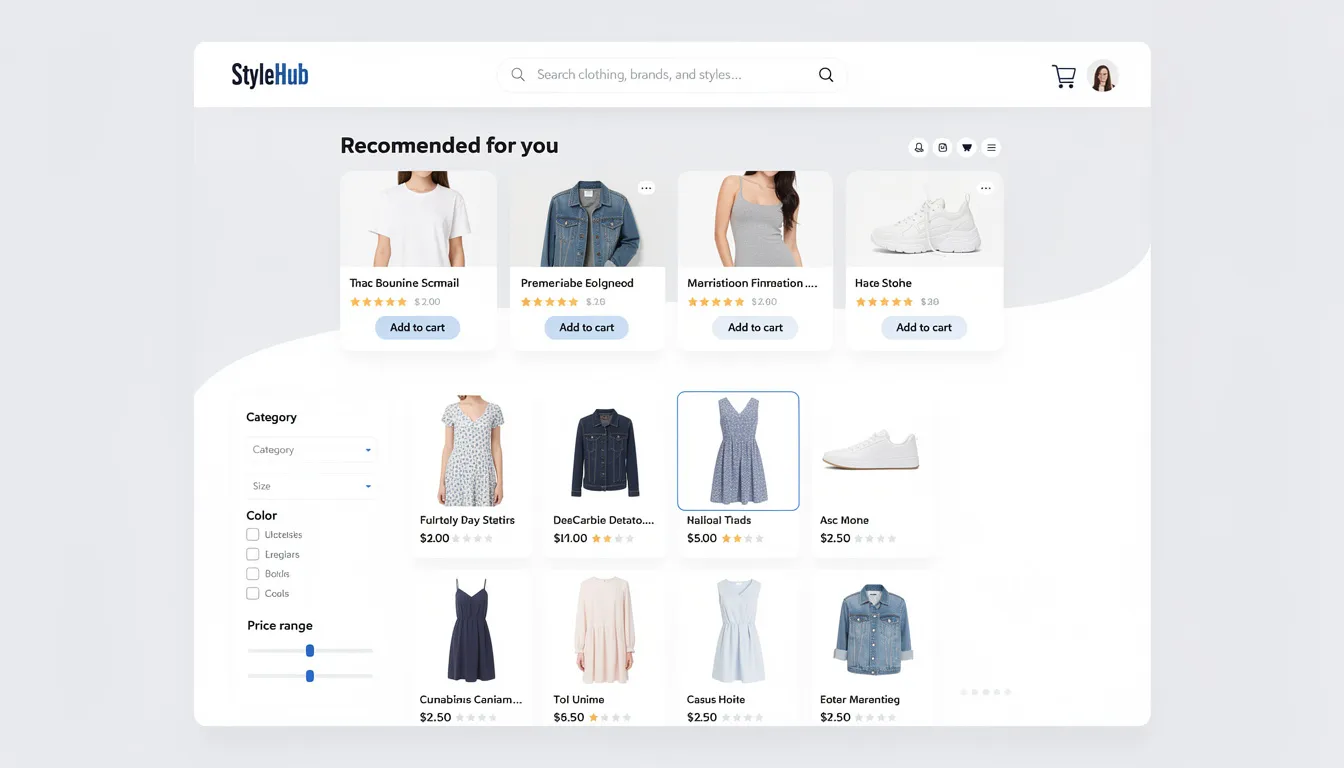

E-commerce Sites

E-commerce sites offer natural language product search. Ask “show me 90s-style jackets under $100” and vector search over product catalogs returns relevant results with AI-generated fit advice. Shopify’s Sidekick (2024) handles queries like this with 85% accuracy according to internal evaluations.
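A minimal sketch of the retrieval step behind such a query, assuming the phrase "90s-style jackets" has already been turned into an embedding vector and "under $100" into a price limit by an upstream model. The three-dimensional vectors, product names, and prices are toy values; real embeddings have hundreds of dimensions and are usually searched with an approximate index rather than a full scan.

```typescript
interface Product {
  name: string;
  price: number;
  embedding: number[]; // toy 3-dimensional vectors for illustration
}

// Cosine similarity between two vectors of equal length.
function cosineSimilarity(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Apply the hard price filter first, then rank the survivors by similarity.
function searchProducts(
  queryEmbedding: number[],
  priceBelow: number,
  catalog: Product[],
  topK: number
): Product[] {
  return catalog
    .filter((p) => p.price < priceBelow)
    .map((p) => ({ product: p, score: cosineSimilarity(queryEmbedding, p.embedding) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, topK)
    .map((x) => x.product);
}

const toyCatalog: Product[] = [
  { name: "Denim trucker jacket", price: 89, embedding: [0.9, 0.1, 0.0] },
  { name: "Leather bomber", price: 240, embedding: [0.8, 0.2, 0.1] },
  { name: "Running shoes", price: 75, embedding: [0.0, 0.1, 0.9] },
  { name: "Windbreaker", price: 60, embedding: [0.7, 0.3, 0.1] },
];

const jacketResults = searchProducts([1, 0, 0], 100, toyCatalog, 2);
```

Keeping the price constraint as an exact filter, rather than leaving it to the embedding, avoids returning a close semantic match that breaks the user's budget.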

SaaS Dashboards

SaaS dashboards include assistants that query databases in plain English. Notion AI (expanded 2023) lets users ask questions about their data and auto-build charts or SQL, reducing query time by 70%. This ability to solve complex tasks through conversation is reshaping how teams interact with business intelligence tools.

The generative UI pipeline works roughly like this: user prompts → API call to a model (GPT-4.1, Claude 3.5, Gemini 2.0, or open alternatives) → server-side orchestration → React or similar components rendered back to the browser. Computer vision models handle image inputs; reasoning models tackle problem-solving tasks.
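The orchestration step is where model output should be checked before anything is rendered. The sketch below assumes the model has been prompted to reply with JSON of the form `{"component": ..., "props": ...}`; that wire format, the component names, and `parseUiSpec` are hypothetical. Only components on an allow-list pass through, and anything else is rejected.

```typescript
// Components the front end is willing to render (hypothetical names).
type ComponentName = "SummaryCard" | "BarChart" | "DataTable";
const ALLOWED_COMPONENTS: readonly string[] = ["SummaryCard", "BarChart", "DataTable"];

interface UiSpec {
  component: ComponentName;
  props: Record<string, unknown>;
}

// Parse and validate raw model output; return null for anything unexpected.
function parseUiSpec(modelOutput: string): UiSpec | null {
  let parsed: unknown;
  try {
    parsed = JSON.parse(modelOutput);
  } catch {
    return null; // not JSON at all
  }
  if (typeof parsed !== "object" || parsed === null) return null;

  const candidate = parsed as { component?: unknown; props?: unknown };
  if (typeof candidate.component !== "string") return null;
  if (!ALLOWED_COMPONENTS.includes(candidate.component)) return null;
  if (
    typeof candidate.props !== "object" ||
    candidate.props === null ||
    Array.isArray(candidate.props)
  ) {
    return null;
  }
  return {
    component: candidate.component as ComponentName,
    props: candidate.props as Record<string, unknown>,
  };
}

const chartSpec = parseUiSpec('{"component":"BarChart","props":{"title":"Q1 revenue"}}');
const rejectedSpec = parseUiSpec('{"component":"script","props":{}}');
```

A production version would also validate the props of each component against a schema; this sketch only checks the outer shape.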

Browser-based creative tools now mirror desktop capabilities. Cursor (a desktop VS Code fork) brings AI code generation to web development. Replicate’s sketch-to-image demos transform inputs using Stable Diffusion 3 Medium. These tools let developers and designers create without leaving their usual environment.

The challenge is keeping up. Weekly flux in this space (Grok-2 vision launching in August 2024, new model updates every few days) makes it nearly impossible to track what matters. This is precisely why curated sources like KeepSanity AI filter 50+ launches down to 5 impactful ones, helping teams prioritize ROI-proven integrations over hype.

AI Agents on the Web: From Chatbots to Autonomous Workflows

AI agents represent the next evolution beyond chat interfaces. These systems can browse websites, click buttons, fill forms, and call APIs on behalf of users, not just answer questions in a text box.

The progression has been dramatic:

Pre-2015: Scripted bots with hard-coded rules

2015-2022: Tools like Selenium and Puppeteer enabling programmatic browser automation

2023-2025: LLM-driven browsing agents that chain reasoning, navigation, and API calls, helped along by reasoning models such as OpenAI’s o1-preview

Agent Capabilities

Modern AI agents can perform many tasks that previously required human attention:

| Capability | Example | Performance |
|---|---|---|
| Multi-site synthesis | Comparing 10 e-commerce pages for deals | 2x faster than humans |
| Form automation | Filling structured web forms | 95% success rate on structured sites (GAIA benchmarks) |
| Research compilation | Gathering information across multiple sources | Handles multi-step reasoning chains |
| Report automation | Downloading and processing dashboards | Reduces repetitive work by 20-50% |

Coding agents like Devin (Cognition, March 2024) fetch documentation and read GitHub repos via browser APIs, resolving about 13% of SWE-bench tasks in Cognition’s reported evaluation. Scientific research agents search arXiv, read papers, and synthesize findings. These AI systems rely heavily on web access to function.
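Underneath, most browsing agents follow the same loop: ask a model for the next action, check it, execute it, and repeat until the task is done or a budget runs out. The sketch below shows that control flow only. The `Action` types, the `Policy` function (a stand-in for a model call), and the rule that `submit` needs approval are all assumptions; nothing here touches a real browser.

```typescript
type Action =
  | { kind: "navigate"; url: string }
  | { kind: "fill"; field: string; value: string }
  | { kind: "submit" }
  | { kind: "done"; result: string };

// Stand-in for the model: given the actions so far, propose the next one.
type Policy = (history: Action[]) => Action;
// Stand-in for a human reviewer or policy engine.
type Approver = (action: Action) => boolean;

interface AgentOutcome {
  status: "done" | "blocked" | "step_limit";
  history: Action[];
  result?: string;
}

function runAgent(policy: Policy, approve: Approver, maxSteps: number): AgentOutcome {
  const history: Action[] = [];
  for (let step = 0; step < maxSteps; step++) {
    const action = policy(history);
    if (action.kind === "done") {
      return { status: "done", history, result: action.result };
    }
    // Assumed rule: submitting a form is high-stakes and needs approval.
    if (action.kind === "submit" && !approve(action)) {
      return { status: "blocked", history };
    }
    history.push(action); // a real agent would execute the action here
  }
  return { status: "step_limit", history };
}

// A scripted policy standing in for model decisions.
const script: Action[] = [
  { kind: "navigate", url: "https://example.com/form" },
  { kind: "fill", field: "email", value: "user@example.com" },
  { kind: "submit" },
  { kind: "done", result: "form submitted" },
];
const scriptedPolicy: Policy = (history) =>
  script[Math.min(history.length, script.length - 1)];

const approvedRun = runAgent(scriptedPolicy, () => true, 10);
const deniedRun = runAgent(scriptedPolicy, () => false, 10);
```

The step budget and the approval hook are the two places where a site or product team can bound what an agent is allowed to do on a user's behalf.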

Safety and Access Considerations

Rate limiting and CAPTCHAs (Cloudflare blocks 30% of bot traffic)

Robots.txt compliance (only 40% of AI crawlers honor these directives according to Common Crawl data)

The need for sites to design agent-specific policies similar to search engine bot policies

Human-in-the-loop requirements for high-stakes decisions
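An agent-specific policy can start with per-crawler groups in robots.txt. The sketch below generates such a file. The user-agent tokens listed (GPTBot, Google-Extended, CCBot, ClaudeBot) are ones their operators have publicly documented, but the list is an assumption that should be checked against each vendor's current documentation, and the helper itself is hypothetical. Compliance with robots.txt is voluntary, so this complements rate limiting rather than replacing it.

```typescript
// Publicly documented AI-related crawler tokens (verify before relying on them).
const AI_CRAWLER_TOKENS: string[] = ["GPTBot", "Google-Extended", "CCBot", "ClaudeBot"];

// Build a robots.txt that disallows the given paths for each listed agent
// and leaves every other crawler unrestricted.
function buildRobotsTxt(blockedAgents: string[], disallowedPaths: string[]): string {
  const groups = blockedAgents.map((agent) =>
    [`User-agent: ${agent}`, ...disallowedPaths.map((path) => `Disallow: ${path}`)].join("\n")
  );
  const everyoneElse = "User-agent: *\nAllow: /";
  return [...groups, everyoneElse].join("\n\n") + "\n";
}

const robotsTxt = buildRobotsTxt(AI_CRAWLER_TOKENS, ["/"]);
```

Passing narrower paths, for example `["/members/", "/drafts/"]`, keeps public pages available for AI citation while excluding sections that should not be used.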

Multi-Agent Systems

Multi-agent systems are emerging (e.g., AutoGen frameworks) where multiple agents coordinate via dashboards to achieve specific goals across workflows, delivering 2-5x efficiency gains.

Ethics, Regulation, and Trust on the AI-Driven Web

The AI web raises the same concerns as AI in general (bias, privacy, misinformation), but amplified to web scale and speed. When billions of users encounter AI-generated content in search results, the stakes for getting it right are enormous.

Concrete regulatory developments are reshaping what’s permissible:

EU AI Act (politically agreed December 2023, phasing in 2024-2026):

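Transparency obligations for generative AI on the web, such as telling users when they are interacting with an AI system and labeling synthetic content (general-purpose models are tiered by training compute, not by parameter count)

Training data disclosure requirements, including a published summary of training content for general-purpose models

Bans on real-time remote biometric identification in publicly accessible spaces for law enforcement, with narrow exceptions

Penalties for non-compliance of up to EUR 35 million or 7% of global annual turnover for the most serious violations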

US/UK policy focus:

Executive Order 14110 (October 2023, rescinded in January 2025), which addressed AI safety and called for guidance on labeling AI-generated content

Heightened scrutiny before 2024 and 2028 elections

C2PA content provenance standard (Content Credentials), with an 80% adoption goal by 2026

Specific web issues require attention from AI researchers and product teams alike:

Hallucinated citations: AI overviews mis-cite sources at approximately 8% according to Hugging Face evaluations, eroding trust when users click through to find different content

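Training data disputes: Lawsuits like The New York Times v. OpenAI (filed December 2023) challenge scraping practices. Google offers the Google-Extended robots.txt token (introduced in September 2023) so sites can opt out of having their content used for its AI models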

Consent and logging: GDPR-style frameworks for prompt logging are emerging, with 70% of users preferring on-device AI according to Pew 2025 polls

Recommended transparency practices:

Clear labeling of AI-generated content (e.g., “AI Overview” badges)

Visible disclosure when users interact with an assistant versus a human

Easy pathways to original sources and citations

Opt-out mechanisms for AI personalization

KeepSanity AI tracks when major regulators, browser vendors, and platforms change rules, so product teams don’t miss critical compliance deadlines buried in the daily noise of AI announcements.

How Web Teams Can Practically Adopt AI (Without Losing Their Sanity)

Between 2022 and 2025, over 10,000 AI tools launched according to theresanaiforthat.com. The resulting FOMO for developers, founders, and marketers is real and paralyzing. Most daily newsletters exacerbate this by padding content with minor updates to impress sponsors.

Here’s a pragmatic, staged approach that works:

Phase 1: Experiment (0-30 days)

Start with low-risk enhancements that don’t require deep integration:

In-page summarization using Hugging Face Inference API (case studies show 15% conversion lifts)

Basic search improvements with AI-powered suggestions

AI-assisted customer support FAQs

Content generation experiments for internal use

Phase 2: Integrate (1-3 months)

Move to API integrations in core product flows:

Recommendations and personalization engines

Search functionality with semantic understanding

Customer support automation (OpenAI fine-tunes have cut support tickets 40% in documented cases)

Robust logging, A/B testing, and rollback capabilities
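For the A/B testing and rollback item, a common pattern is deterministic bucketing: hash the user and experiment name so each user always lands in the same variant, and gate everything behind a flag that can be switched off. The sketch below assumes an FNV-1a-style 32-bit hash over UTF-16 code units (identical to byte-wise FNV-1a for ASCII input); the function names, the 10,000-bucket granularity, and the `enabled` flag are illustrative choices.

```typescript
// FNV-1a-style 32-bit hash over the string's UTF-16 code units.
function fnv1a(input: string): number {
  let hash = 0x811c9dc5; // FNV offset basis
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0; // multiply by FNV prime, keep unsigned
  }
  return hash >>> 0;
}

type Variant = "control" | "treatment";

// treatmentShare is a fraction between 0 and 1.
// enabled = false acts as the rollback switch: everyone gets control.
function assignVariant(
  userId: string,
  experiment: string,
  treatmentShare: number,
  enabled: boolean
): Variant {
  if (!enabled) return "control";
  const bucket = fnv1a(`${experiment}:${userId}`) % 10000;
  return bucket < treatmentShare * 10000 ? "treatment" : "control";
}

const firstAssignment = assignVariant("user-42", "ai-search-suggestions", 0.5, true);
const repeatAssignment = assignVariant("user-42", "ai-search-suggestions", 0.5, true);
```

Including the experiment name in the hashed key keeps assignments independent across experiments, so the same users are not always grouped together.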

Phase 3: Transform (3-12 months)

Explore deeper product changes once early experiments show real ROI:

Agentic workflows for task automation

On-device models for privacy-sensitive features

Generative interfaces that co-create with users

Full AI-native product experiences

Metrics that matter:

Track engagement, conversion, support volume, and time-on-task when introducing AI features. The goal is measurable improvement, not shipping “AI” as a checkbox. Avoid features that add cognitive load without delivering value.

A weekly cadence for AI news fits naturally into this process. Teams that subscribe to KeepSanity AI scan once per week (under 10 minutes) for items worth adding to their roadmap or experiments. No daily pile-up. No FOMO. Just signal.

Governance recommendation:

Establish a small cross-functional group (product, engineering, legal, design) that meets briefly, weekly or bi-weekly, to evaluate new AI-web possibilities. This group triages what to experiment with, what requires legal review, and what’s just noise.

FAQ

How is the “AI web” different from just using ChatGPT or Gemini in a separate tab?

The artificial intelligence web means AI embedded directly into websites, browsers, and search results, not just standalone chat interfaces. While ChatGPT and Gemini are powerful tools, the bigger shift is that nearly every major web surface (search, docs, email, e-commerce, dashboards) is quietly gaining AI features behind the scenes. Forrester projects that 90% of top sites will have embedded AI by 2026.

Think in terms of “AI as part of UX” rather than “another tool users must open.” The difference is whether your users encounter AI naturally in their workflow or have to context-switch to a separate application.

Will AI search and answer boxes reduce traffic to my website?

Answer-first interfaces can reduce simple informational clicks; data suggests 25% traffic dips for fact-based queries. However, high-intent traffic for deeper, original, or niche content often increases (by 18% according to SEMrush 2025 analysis).

Focus on high-quality, well-structured, original content. Sites with unique datasets see 15-25% citation uplifts in AI overviews. The science behind this is straightforward: models prefer primary sources they can cite with confidence.

This landscape continues evolving through 2024-2026. Keeping up with official guidance from search providers, summarized weekly by sources like KeepSanity AI, helps you respond strategically rather than reactively.

Do I need to run models in the browser, or is a cloud API enough?

For most prototypes and early features, cloud APIs are sufficient, and the vast majority of projects start there. Cloud offers state-of-the-art performance with minimal setup.

Consider on-device models when:

Privacy requirements prohibit sending user data off-device

Latency matters (sub-200ms vs 1-3 second cloud roundtrips)

Cost per query becomes significant at scale

Offline functionality is needed

Browser support for WebGPU and optimized small models is improving rapidly. What’s considered too slow or too limited in 2024 may be production-ready by late 2025. The development trajectory points toward capable local models for many common tasks.

What skills should web developers learn to stay relevant in an AI-first web?

Technical priorities:

Strong JavaScript/TypeScript fundamentals

Familiarity with at least one major AI API (OpenAI, Anthropic, Google, or open-source stacks)

Understanding of prompt design (good prompting can boost performance 30% according to research)

Comfort reading API docs and model changelogs

Non-technical skills:

UX thinking for AI features (when does AI help vs. add friction?)

Awareness of privacy and compliance basics

Ability to translate AI news into actionable experiments

Build small side projects (AI-enhanced search, a simple AI agent, browser-based assistants) to gain hands-on experience. The knowledge compounds quickly.

How can my team keep up with AI changes without being overwhelmed?

Set a fixed, low-friction information diet: one or two trusted sources, read once a week. Daily newsletters often pad content with minor updates to satisfy sponsors. KeepSanity AI was built specifically to counter this, delivering only major AI news that affects product strategy in a single weekly email with zero ads.

Internally, establish a regular review (30 minutes weekly or bi-weekly) where someone presents only the 2-3 changes that could impact your web stack or roadmap. This form of structured intake prevents the constant context-switching that daily AI news creates.

Lower your shoulders. The noise is gone. Here is your signal.

The artificial intelligence web isn’t a future state; it’s the daily life of every user who opens a browser in 2025. AI research continues accelerating, new AI algorithms emerge weekly, and computer science research is being applied to web development in ways we couldn’t have imagined just a few years ago.

The teams that thrive won’t be those who chase every launch or sign up for every tool. They’ll be the ones who adopt a staged approach, track metrics that matter, and maintain a sustainable information diet that preserves both their productivity and their sanity.

Ready to stop drowning in AI noise? KeepSanity AI delivers one email per week with only the major AI news that actually happened: no daily filler, no ads, no sponsors to impress. Just the signal your team needs to build smarter.