Machine Learning Models

Machine learning models power everything from the recommendations you see on Netflix to the fraud alerts on your credit card. They’re the trained artifacts that take raw data and turn it into predictions, decisions, and insights.

But here’s the catch: most explanations of ML models either drown you in math or stay so surface-level they’re useless. This guide takes a different approach. We’ll walk through what machine learning models actually are, how they work, and which families matter most for real-world applications-with concrete examples from 2015 through 2024.

Key Takeaways

A machine learning model is the trained output of a machine learning algorithm-the same algorithm (like a decision tree) can produce vastly different models depending on training data and hyperparameters

ML models fall into major families: supervised learning (classification and regression models), unsupervised learning (clustering, dimensionality reduction), semi-supervised, reinforcement learning, and deep learning

Practical applications span fraud detection, recommendation systems, language models like GPT-4 (2023) and Gemini (2023), predictive maintenance, and content generation

The end-to-end lifecycle includes algorithm selection, model training on historical data, evaluation with task-specific metrics, and deployment via APIs or embedded systems

No single model is universally “best”-the right choice depends on your data, constraints, and what you’re trying to predict

What Is a Machine Learning Model?

Definition of Machine Learning Model:

Machine learning models are computer programs that recognize patterns in data to make predictions.

Definition of Machine Learning Algorithm:

A machine learning algorithm is a mathematical method to find patterns in a set of data.

How Models Are Created:

Machine learning models are created by training a machine learning algorithm on a dataset and optimizing it to minimize errors.

A machine learning model is a computer program that has learned patterns from historical data and can make predictions on new data. Think of a 2024 credit-risk model that predicts default probability for loan applicants-it wasn’t explicitly programmed with rules like “reject if income < $30,000.” Instead, it learned patterns from thousands of past loan outcomes.

The distinction between “algorithm” and “model” trips up many people. A machine learning algorithm is the procedure-like logistic regression or XGBoost-that defines how learning happens. The model is the trained artifact with learned parameters: the specific weights, thresholds, and decision boundaries that result from running that algorithm on your data set.

Here’s a concrete example: you train a decision tree algorithm on labeled images of cats and dogs from 2021–2024. The resulting model contains specific split conditions (“if pixel region X has value > 127, go left”) that classify new pet photos. Same algorithm, different training data, completely different model.
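To make the algorithm/model distinction tangible, here is a minimal sketch using scikit-learn. The two label sets are made up for illustration; the point is only that one algorithm with identical hyperparameters yields two different trained models.

```python
from sklearn.tree import DecisionTreeClassifier

# One feature, six made-up rows, and two different labelings of them.
X = [[1], [2], [3], [4], [5], [6]]
y_a = [0, 0, 0, 1, 1, 1]  # labels flip between 3 and 4
y_b = [0, 0, 0, 0, 0, 1]  # labels flip between 5 and 6

# Same algorithm, same hyperparameters...
model_a = DecisionTreeClassifier(max_depth=1, random_state=0).fit(X, y_a)
model_b = DecisionTreeClassifier(max_depth=1, random_state=0).fit(X, y_b)

# ...but the learned split thresholds (the models' parameters) differ.
threshold_a = model_a.tree_.threshold[0]
threshold_b = model_b.tree_.threshold[0]
print("model_a splits at", threshold_a)
print("model_b splits at", threshold_b)

# The same new input is therefore classified differently.
print(model_a.predict([[4.5]]), model_b.predict([[4.5]]))
```

The algorithm is the `DecisionTreeClassifier` class; each fitted object is a model.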

Machine learning models serve various purposes:

Classification: Spam vs. not spam, fraud vs. legitimate transaction

Regression: Forecasting 2025 sales, estimating house prices

Ranking: Ordering search results, prioritizing recommendations

Generation: Creating text, images, or code

Modern ML models are embedded in tools across every industry-from marketing automation platforms to robotics stacks. At KeepSanity, we track these deployments weekly, filtering signal from noise so you see only the AI developments that actually matter.

Core Components of a Machine Learning Model

Under the hood, most learning models share similar building blocks that determine how they learn and predict.

Parameters: The values the model learns during training, such as neural network weights, decision tree split thresholds, or regression coefficients. Frontier models are enormous: GPT-4 is reported, though not officially confirmed, to have on the order of 1.7 trillion parameters.

Features (input variables): The input data the model uses to make predictions. These might be age, income, click history, or pixel values, depending on which data points are relevant to your problem.

Architecture: The structural design of the model. For a decision tree, this means depth and branching rules. For artificial neural networks, it’s the number of layers and neurons per layer.

Loss function: The mathematical function that quantifies prediction error. Mean squared error (MSE) guides regression problems; cross-entropy loss handles classification. Lower loss means better predictions.

Hyperparameters: Settings chosen before training rather than learned from the data, for example:

Learning rate (0.001 is a common default with the Adam optimizer)

Number of trees in a random forest (typically 100-500)

Number of clusters k in k-means clustering

Maximum tree depth (often capped at 10-20 to prevent overfitting)

Data splits: Standard practice uses train/validation/test splits, commonly 80/10/10. The training data teaches the model, validation data tunes hyperparameters, and test data provides an unbiased final evaluation. Cross-validation (typically k=5) gives a more stable performance estimate than a single split because every observation is used for validation exactly once.
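One common way to get an 80/10/10 split is to call scikit-learn's `train_test_split` twice. The sketch below uses random placeholder data; `X` and `y` stand in for your own features and labels.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Placeholder data: 1,000 rows, 5 features, binary labels.
rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 5))
y = rng.integers(0, 2, size=1000)

# Step 1: hold out 20% of the rows.
X_train, X_hold, y_train, y_hold = train_test_split(
    X, y, test_size=0.2, random_state=42
)
# Step 2: split the holdout in half -> 10% validation, 10% test.
X_val, X_test, y_val, y_test = train_test_split(
    X_hold, y_hold, test_size=0.5, random_state=42
)

print(len(X_train), len(X_val), len(X_test))
```

For classification with rare classes, passing `stratify=y` (and `stratify=y_hold` in the second call) keeps class proportions similar across the three sets.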

Each component influences accuracy, speed, and interpretability. A deeper neural network might capture complex patterns but train slower and resist explanation. A shallow decision tree trains fast and explains itself but might miss subtle signals.
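The two loss functions named in the component list can be written in a few lines of NumPy. This is an illustrative sketch with hand-picked numbers, not how a training framework implements them internally.

```python
import numpy as np

def mse(y_true, y_pred):
    """Mean squared error for regression."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.mean((y_true - y_pred) ** 2))

def binary_cross_entropy(y_true, p_pred, eps=1e-12):
    """Cross-entropy for binary labels and predicted probabilities."""
    y_true = np.asarray(y_true, dtype=float)
    # Clip so log(0) never occurs.
    p = np.clip(np.asarray(p_pred, dtype=float), eps, 1 - eps)
    return float(-np.mean(y_true * np.log(p) + (1 - y_true) * np.log(1 - p)))

# Squared errors are 1 and 4, so the mean is 2.5.
mse_value = mse([3.0, 5.0], [2.0, 7.0])

# Confident and right gives a low loss; confident and wrong gives a high loss.
good = binary_cross_entropy([1, 0], [0.9, 0.1])
bad = binary_cross_entropy([1, 0], [0.1, 0.9])
print(mse_value, round(good, 4), round(bad, 4))
```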

Types of Machine Learning Models

Summary:

Machine learning models can be broadly categorized into supervised learning, unsupervised learning, semi-supervised learning, and reinforcement learning.

Machine learning models are usually grouped by how they learn from data: supervised, unsupervised, semi-supervised, and reinforcement learning. Deep learning represents a subset that spans multiple paradigms but deserves separate attention due to its scale and architectural complexity.

The choice of learning paradigm depends on:

Label availability: Do you have labeled data showing the “right answer”?

Business goal: Are you predicting, discovering patterns, or optimizing decisions over time?

Data scale and structure: Images? Text? Tabular transactions?

These types cover most applications in production today-from fraud detection (supervised machine learning) and customer segmentation (unsupervised machine learning) to game-playing agents and robotics (reinforcement learning).

Supervised Learning Models

Supervised learning models learn from labeled examples. You show the model thousands of emails labeled “spam” or “not spam” from 2010–2024, and it learns to predict labels for new, unseen data.

Two major task families exist:

Classification: Predicting discrete labels (churn vs. retain, fraud vs. legitimate)

Regression: Predicting continuous values like prices, demand volumes, or temperature

Common supervised algorithms include:

| Algorithm | Type | Best For |
|---|---|---|
| Linear regression | Regression | Simple relationships, interpretable models |
| Logistic regression | Classification | Binary classification problems, probability outputs |
| Support vector machines | Both | High-dimensional data, clear margins |
| Decision trees | Both | Interpretable rules, mixed data types |
| Random forests | Both | Robust predictions, feature importance |
| XGBoost/LightGBM | Both | Top performance on tabular data |

Real-world examples:

Credit-scoring models in fintech achieving 85%+ precision on default prediction

2023 customer churn prediction in telecom with 95% AUC using gradient boosting

Medical diagnosis support systems classifying scans with sensitivity above 95%

Ad click-through rate prediction boosting revenue 10-15%

Supervised learning dominates production machine learning systems because labeled data-transactions, logs, CRM records-is widely available in most organizations.

Unsupervised Learning Models

Unsupervised learning models work without labeled outputs, discovering hidden patterns and structure in raw input data.

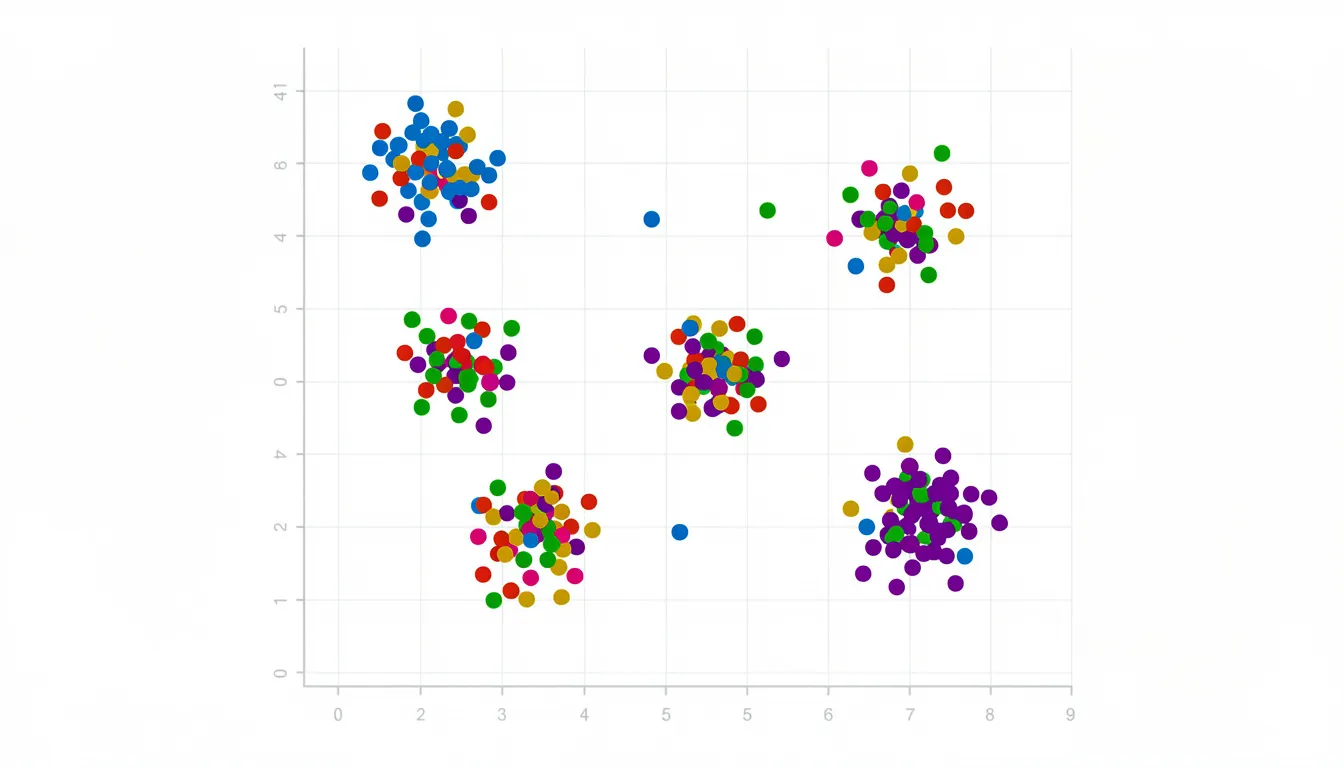

Clustering groups similar data points:

K-means: partitions data into k clusters, useful for customer segmentation

DBSCAN: handles non-spherical clusters and identifies outliers

Hierarchical clustering: produces dendrograms showing relationships

Dimensionality reduction compresses high-dimensional data:

Principal component analysis (PCA): reduces features while retaining 95% of variance

t-SNE (2008): creates 2D visualizations revealing manifolds

UMAP (2018): offers faster, topology-preserving alternatives

Anomaly detection finds rare events:

Isolation forest: isolates outliers 60% faster than SVM approaches

One-class SVM: creates boundaries around normal behavior

Used for suspicious login detection in 2020–2024 security systems

Recommendation systems at Netflix and Spotify combine unsupervised embeddings (autoencoders reducing user vectors to latent dimensions) with supervised ranking, improving NDCG@10 by 25%.

Semi-Supervised Learning Models

Semi-supervised learning uses a small amount of labeled data with a much larger pool of unlabeled data to boost performance-practical when manual labeling is expensive.

Common applications:

Image recognition: Label a few thousand images manually, then use millions of unlabeled images with consistency regularization

Text classification: Tag a subset of news articles from 2018–2024, extend to millions of untagged documents

Medical imaging: Subset-tagged scans combined with millions of unlabeled images

Popular approaches:

Pseudo-labeling: assigns confident predictions as labels, boosting accuracy 15-20%

Consistency-based methods (like FixMatch): achieve 94% on CIFAR-10 with only 250 labels

Graph-based algorithms: leverage relationships in social networks and recommendation systems

This approach shines in legal document review, medical imaging, and any domain where expert labeling costs prohibit fully supervised approaches.
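Pseudo-labeling can be tried with scikit-learn's `SelfTrainingClassifier`, which repeatedly adds high-confidence predictions to the labeled set. The sketch below is a toy setup under stated assumptions: the data is synthetic, only the first 50 rows are treated as hand-labeled, and the 0.9 confidence threshold is an arbitrary choice.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.semi_supervised import SelfTrainingClassifier

X, y = make_classification(n_samples=500, n_features=10, random_state=0)

# Pretend only the first 50 rows were labeled; -1 marks "unlabeled".
y_partial = np.full_like(y, -1)
y_partial[:50] = y[:50]

# Wrap any classifier that exposes predict_proba.
self_training = SelfTrainingClassifier(
    LogisticRegression(max_iter=1000), threshold=0.9
)
self_training.fit(X, y_partial)

# transduction_ holds the original labels plus any pseudo-labels added.
n_pseudo_labeled = int((self_training.transduction_ != -1).sum()) - 50
accuracy_on_unlabeled = self_training.score(X[50:], y[50:])
print(n_pseudo_labeled, round(accuracy_on_unlabeled, 3))
```

Whether pseudo-labels help depends heavily on how good the initial model is; confident but wrong predictions get reinforced, so it is worth comparing against a baseline trained on the labeled rows alone.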

Reinforcement Learning Models

Reinforcement learning (RL) models learn by interacting with an environment, receiving rewards or penalties, and improving a policy over time. Unlike supervised learning, there’s no labeled “correct answer”-just feedback on outcomes.

Core RL elements:

Agent: The decision-maker (a robot, game-player, or recommendation engine)

State: Current situation (game board, inventory levels, user context)

Action: Available choices (move left, recommend product A)

Reward: Feedback signal (+1 for winning, -1 for losing)

Environment: The world the agent operates in

Historical milestones in reinforcement learning algorithms:

DeepMind’s DQN beating Atari games at superhuman levels (2015)

AlphaGo defeating world Go champion Lee Sedol (2016)

AlphaZero mastering chess through pure self-play (2017)

Warehouse robotics achieving 99% pick accuracy in the early 2020s

Key model families:

Value-based (Q-learning, Deep Q-Networks): estimate action values

Policy-based (REINFORCE): directly learn action probabilities

Actor-critic architectures: balance sample efficiency and stability

Emerging use cases in operations research and recommender systems optimize long-term metrics like lifetime value instead of short-term clicks-yielding 10-20% uplift over greedy approaches.
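To show how agent, state, action, and reward fit together, here is a minimal tabular Q-learning sketch on a made-up five-state corridor. The environment, constants, and the purely random exploration policy are all assumptions chosen for brevity; production RL systems are far more involved.

```python
import numpy as np

N_STATES, GOAL = 5, 4   # states 0..4 on a line; reaching state 4 ends an episode
LEFT, RIGHT = 0, 1
ALPHA, GAMMA = 0.5, 0.9  # learning rate and discount factor

def step(state, action):
    """Toy environment: move one cell; reward 1.0 only on reaching the goal."""
    next_state = max(state - 1, 0) if action == LEFT else state + 1
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL

rng = np.random.default_rng(0)
Q = np.zeros((N_STATES, 2))  # one value per (state, action) pair

for _ in range(500):          # episodes
    state = 0
    for _ in range(100):      # step cap per episode
        # Explore uniformly at random; Q-learning is off-policy, so it can
        # still learn the values of the greedy policy from this behavior.
        action = int(rng.integers(2))
        next_state, reward, done = step(state, action)
        target = reward if done else reward + GAMMA * Q[next_state].max()
        Q[state, action] += ALPHA * (target - Q[state, action])
        state = next_state
        if done:
            break

# Best action in each non-terminal state according to the learned values.
greedy_policy = Q[:GOAL].argmax(axis=1)
print(greedy_policy)
print(Q.round(3))
```

Deep Q-Networks replace the table `Q` with a neural network so the same update can scale to large state spaces such as game screens.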

Deep Learning Models

Deep learning models are neural networks with multiple layers capable of learning hierarchical representations from large, complex datasets. They power the most headline-grabbing AI systems of the past decade.

Important architectures:

Convolutional neural networks (CNNs): Image classification since AlexNet (2012) dropped ImageNet top-5 error from 25% to 15%

Recurrent networks and LSTMs: Sequence modeling for natural language processing before transformers dominated

Transformers (2017): The architecture behind BERT (2018), GPT-3 (2020), GPT-4 (2023), and Claude

Practical applications:

Machine translation and speech recognition

Recommendation systems processing millions of user interactions

Medical imaging diagnostics spotting tumors 5% earlier than traditional methods

Computer vision for autonomous vehicles and industrial inspection

Code assistants like GitHub Copilot delivering 40% developer speedup

Many weekly AI breakthroughs-new vision-language models, image recognition systems, and code assistants-are deep neural networks trained on web-scale data. Deep learning can be supervised, unsupervised, or self-supervised, but its scale and architecture warrant separate discussion.

Supervised Models in Detail: Regression and Classification

Most industry problems boil down to predicting a number (regression) or a label (classification). These two task families form the backbone of predictive modeling in production systems.

This section expands on both types, covering evaluation metrics and common implementations in Python with libraries like scikit-learn. Understanding these models gives you the foundation to tackle 80% of real-world machine learning problems.

Machine Learning Regression Models

Regression predicts a continuous numeric variable. A regression machine learning model might forecast monthly revenue for 2025, estimate house prices in a given city, or predict demand for a product line.

Core regression metrics:

MAE (Mean Absolute Error): Average absolute residuals; robust to outliers

MSE (Mean Squared Error): Average squared errors; penalizes large errors

RMSE (Root MSE): Square root of MSE; interpretable in original units

R²: Variance explained; overall model fit

Note: “Accuracy” is reserved for classification-never use it for regression problems.
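The four regression metrics above are simple enough to compute by hand, which helps when sanity-checking library output. The numbers below are invented for illustration.

```python
import numpy as np

# Four made-up house prices (in thousands) and a model's predictions.
y_true = np.array([100.0, 150.0, 200.0, 250.0])
y_pred = np.array([110.0, 140.0, 210.0, 230.0])

errors = y_true - y_pred                 # [-10, 10, -10, 20]

mae = np.mean(np.abs(errors))            # (10 + 10 + 10 + 20) / 4
mse = np.mean(errors ** 2)               # (100 + 100 + 100 + 400) / 4
rmse = np.sqrt(mse)                      # same units as the target
ss_res = np.sum(errors ** 2)             # residual sum of squares
ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares
r2 = 1 - ss_res / ss_tot

print(f"MAE={mae}, MSE={mse}, RMSE={rmse:.3f}, R2={r2:.3f}")
```

Note how the single 20-unit miss contributes 400 of the 700 total squared error: that is what "penalizes large errors" means in practice. The same values are available from `sklearn.metrics` (`mean_absolute_error`, `mean_squared_error`, `r2_score`).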

Regression algorithms from simple to regularized:

Simple linear regression: One feature, one best-fit line using ordinary least squares. Works when the relationship follows a linear function.

Multiple linear regression: Many independent variables, fitting y = β₀ + β₁x₁ + β₂x₂ + … using a linear combination of features

Ridge regression (L2): Adds penalty λ||β||² to shrink coefficients 20-50%, controlling overfitting on multicollinear data

Lasso regression (L1): L1 penalty induces sparsity, selecting 30-70% of features while regularizing

Python implementation guidance:

    from sklearn.linear_model import LinearRegression, Ridge, Lasso

    # X_train and y_train are assumed to be your prepared features and target.

    # Simple linear regression model
    model = LinearRegression()
    model.fit(X_train, y_train)

    # Ridge with L2 regularization (alpha sets the penalty strength)
    ridge = Ridge(alpha=1.0)

    # Lasso for feature selection (larger alpha zeroes out more coefficients)
    lasso = Lasso(alpha=0.1)

The linear regression algorithm remains a strong baseline for regression analysis-start here before reaching for complex methods.

Machine Learning Classification Models

Classification assigns discrete categories: fraud vs. non-fraud, churn vs. retain, or email labels (promotions, social, primary). Classification algorithms power spam filters, medical diagnosis, and customer segmentation.

Binary vs. multiclass:

Binary: Two classes (fraud detection, click/no-click)

Multiclass: Multiple categories (handwritten digit recognition with 10 classes, sentiment as positive/negative/neutral)

Classification metrics:

Accuracy: Correct / Total; appropriate when classes are balanced

Precision: TP / (TP + FP); prioritize when false positives are costly

Recall: TP / (TP + FN); prioritize when missing a positive is costly

F1-score: Harmonic mean of precision and recall; useful on imbalanced datasets

AUC-ROC: Area under the ROC curve; threshold-independent comparison

For imbalanced datasets like fraud detection, precision and recall matter far more than accuracy. If only 1% of transactions are fraudulent, a model that always predicts “not fraud” achieves 99% accuracy but catches no fraud.
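The accuracy trap on imbalanced data is easy to reproduce. The sketch below uses made-up labels (1% fraud) and two hypothetical predictors: one that never flags anything, and one that catches most fraud at the cost of some false alarms.

```python
import numpy as np
from sklearn.metrics import (
    accuracy_score, f1_score, precision_score, recall_score
)

# 1,000 made-up transactions, 10 of which (1%) are fraud.
y_true = np.zeros(1000, dtype=int)
y_true[:10] = 1

# Predictor A: always says "not fraud".
y_always_legit = np.zeros(1000, dtype=int)
baseline_accuracy = accuracy_score(y_true, y_always_legit)
baseline_recall = recall_score(y_true, y_always_legit)

# Predictor B (hypothetical): catches 8 of 10 frauds, raises 20 false alarms.
y_model = np.zeros(1000, dtype=int)
y_model[:8] = 1       # 8 true positives
y_model[10:30] = 1    # 20 false positives
model_accuracy = accuracy_score(y_true, y_model)
model_precision = precision_score(y_true, y_model)
model_recall = recall_score(y_true, y_model)
model_f1 = f1_score(y_true, y_model)

print("always-legit: accuracy", baseline_accuracy, "recall", baseline_recall)
print("model: accuracy", model_accuracy, "precision", round(model_precision, 3),
      "recall", model_recall, "F1", round(model_f1, 3))
```

Predictor B has lower accuracy than the do-nothing baseline, yet it is the only one of the two that is useful, which is why precision, recall, and F1 are the metrics to watch here.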

Common classifiers:

Logistic regression: Uses the logistic function to output probabilities between 0 and 1, mapped to classes via threshold. Excellent baseline for binary classification problems.

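K-nearest neighbors (KNN): Lazy learner that classifies a point by majority vote among its k nearest neighbors (k=5 with Euclidean distance is a common default). Simple but slow at inference on large datasets.

Support vector machines (SVM): Maximum-margin hyperplanes, with the kernel trick enabling non-linear decision boundaries. Kernel SVMs reach roughly 98% accuracy on MNIST.

Naive Bayes: Probabilistic classifier assuming features are conditionally independent given the class. Fast to train and a long-standing baseline for spam filtering.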

Tree-based ensembles: Random forests and gradient boosting for top performance.

Tree-Based and Ensemble Models

Tree-based models dominate tabular data analysis due to their interpretability (for shallow trees) and strong predictive performance when combined into ensembles. They handle both classification and regression models effectively.

These models consistently rank among top performers in 2016–2024 Kaggle competitions and production systems. They’re easy to train and deploy with mainstream libraries including scikit-learn, XGBoost, LightGBM, and CatBoost.

Decision Trees

A decision tree is a flowchart-like mathematical model that splits data by feature conditions until reaching a leaf node predicting a class or value. The decision tree algorithm recursively partitions input data based on feature thresholds.

How splits are chosen:

Information gain (reduction in entropy)

Gini impurity (1 - Σpᵢ²)

Variance reduction (for regression)

Each split aims to create purer child nodes-separating classes or reducing variance.
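The split criteria above can be computed directly. The sketch below implements Gini impurity and entropy-based information gain in NumPy and compares a clean split with a poor one on made-up labels; the function names are illustrative, not a library API.

```python
import numpy as np

def class_proportions(labels):
    """Fraction of samples in each class present in `labels`."""
    _, counts = np.unique(labels, return_counts=True)
    return counts / counts.sum()

def gini(labels):
    """Gini impurity: 1 - sum(p_i^2)."""
    p = class_proportions(labels)
    return float(1.0 - np.sum(p ** 2))

def entropy(labels):
    """Shannon entropy in bits: -sum(p_i * log2(p_i))."""
    p = class_proportions(labels)
    return float(-np.sum(p * np.log2(p)))

def information_gain(parent, left, right):
    """Parent entropy minus the size-weighted entropy of the children."""
    n = len(parent)
    weighted = (len(left) / n) * entropy(left) + (len(right) / n) * entropy(right)
    return entropy(parent) - weighted

parent = [0, 0, 0, 1, 1, 1]

# A split that separates the classes perfectly...
perfect_gain = information_gain(parent, [0, 0, 0], [1, 1, 1])
# ...versus one that leaves both children mixed.
poor_gain = information_gain(parent, [0, 0, 1], [0, 1, 1])

print("parent gini:", gini(parent))
print("perfect split gain:", perfect_gain)
print("poor split gain:", round(poor_gain, 4))
```

A tree-growing algorithm evaluates many candidate thresholds this way and keeps the one with the highest gain (or lowest weighted impurity).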

Advantages of decision tree learning:

Interpretability: Shallow trees explain themselves in plain language

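Mixed data types: Can split on both numeric and categorical features in principle, though some implementations (including scikit-learn’s) require categorical features to be numerically encoded first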

Minimal preprocessing: No normalization required

Limitations:

Overfitting: Trees grown deep without constraints memorize the training data (limit with max_depth or min_samples_split)

Instability: Small data changes can produce completely different trees

Accuracy: Single trees rarely match ensemble performance

Real-world uses include simple credit decision rules, eligibility checks, and decision aids for customer support agents where explainability matters more than maximum accuracy.

Random Forests

Random forests build multiple decision trees on bootstrap samples of the data, with feature subsampling at each split to decorrelate trees. The random forest algorithm aggregates predictions: majority vote for classification, average for regression.

Strengths:

Improved generalization over single trees

Robustness to overfitting through averaging

Built-in feature importance measures

Captures patterns and interactions across many features

Usage examples (2018–2024):

Fraud detection baselines achieving strong AUC scores

Credit risk scoring in fintech applications

Churn prediction where interpretability and performance both matter

Implementation guidance:

    from sklearn.ensemble import RandomForestClassifier

    rf = RandomForestClassifier(
        n_estimators=100,     # Number of trees (100-500 typical)
        max_depth=10,         # Control overfitting
        max_features='sqrt',  # Decorrelate trees
    )

Gradient Boosting and Modern Ensembles

Gradient boosting sequentially builds trees, each correcting errors of the previous ensemble by following the gradient of a loss function. Unlike random forests’ parallel independence, boosted trees learn from each other.
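The "each tree corrects the previous ensemble" idea can be sketched from scratch for squared-error loss, where the negative gradient is simply the residual. This is a teaching sketch on synthetic data with arbitrary settings (`LEARNING_RATE`, `N_ROUNDS`, depth 2), not a substitute for XGBoost or LightGBM.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

# Synthetic 1-D regression problem: noisy sine wave.
rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(300, 1))
y = np.sin(X[:, 0]) + rng.normal(scale=0.1, size=300)

LEARNING_RATE, N_ROUNDS = 0.1, 100

# Round 0: a constant prediction (the mean minimizes squared error).
prediction = np.full_like(y, y.mean())
baseline_mse = np.mean((y - prediction) ** 2)
trees = []

for _ in range(N_ROUNDS):
    # For squared error, the negative gradient is the residual.
    residuals = y - prediction
    tree = DecisionTreeRegressor(max_depth=2, random_state=0).fit(X, residuals)
    # Take a small step in the direction the new tree suggests.
    prediction += LEARNING_RATE * tree.predict(X)
    trees.append(tree)

boosted_mse = np.mean((y - prediction) ** 2)

def predict(X_new):
    """Ensemble prediction: initial constant plus all scaled tree outputs."""
    return y.mean() + LEARNING_RATE * sum(t.predict(X_new) for t in trees)

print(f"training MSE: {baseline_mse:.4f} -> {boosted_mse:.4f}")
```

Training error always falls with more rounds, so real libraries add validation-based early stopping and regularization to keep the ensemble from overfitting.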

Popular libraries:

| Library | Release | Key Innovation |
|---|---|---|
| XGBoost | 2014 (paper 2016) | Regularized objective, sparsity-aware split finding |
| LightGBM | 2016 (paper 2017) | Histogram-based splits, leaf-wise tree growth |
| CatBoost | 2017 | Ordered boosting, native categorical features |

Key strengths:

State-of-the-art performance on tabular data

Native handling of missing values

Extensive hyperparameter controls for regularization and speed

Consistent Kaggle winners 2016–2024

Applied examples:

Click-through rate prediction in online advertising (0.85+ AUC)

Risk scoring in insurance underwriting

Lead scoring for B2B marketing teams

Exploratory data analysis workflows where performance matters

These ensemble methods represent the go-to choice for serious data science work on structured data before reaching for deep learning.

Unsupervised Learning Models: Clustering and Beyond

Unsupervised models explore data structure without labels, supporting tasks like segmentation, anomaly detection, and feature extraction through data mining techniques.

Clustering is the most commonly used approach, but dimensionality reduction and embedding models play critical roles in modern pipelines. Often, unsupervised models feed into supervised systems-cluster users first, then build segment-specific prediction models.

Common 2010s–2020s applications include e-commerce customer behavior analysis, marketing segmentation, and IoT sensor anomaly detection.

K-Means Clustering

K-means clustering partitions data into k clusters by alternately assigning points to the nearest centroid and recomputing centroids. It’s the most widely used clustering algorithm for grouping similar data points.

The algorithm:

Initialize k centroids (randomly or via k-means++)

Assign each point to nearest centroid

Recompute centroids as cluster means

Repeat until assignments stop changing or an iteration cap is reached (scikit-learn’s default cap is 300 iterations)
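The four steps above translate almost line for line into NumPy. The sketch below uses plain random initialization rather than k-means++, and the two well-separated blobs are synthetic, so treat it as an illustration of the loop rather than a production implementation.

```python
import numpy as np

def kmeans(X, k, n_iter=100, seed=0):
    rng = np.random.default_rng(seed)
    # Step 1: initialize centroids by picking k distinct data points.
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iter):
        # Step 2: assign each point to its nearest centroid.
        distances = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
        labels = distances.argmin(axis=1)
        # Step 3: recompute each centroid as the mean of its points
        # (keep the old centroid if a cluster ends up empty).
        new_centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        # Step 4: stop once the centroids no longer move.
        if np.allclose(new_centroids, centroids):
            break
        centroids = new_centroids
    return centroids, labels

# Two synthetic blobs centered near (0, 0) and (10, 10).
rng = np.random.default_rng(42)
blob_a = rng.normal(loc=0.0, scale=0.5, size=(50, 2))
blob_b = rng.normal(loc=10.0, scale=0.5, size=(50, 2))
X = np.vstack([blob_a, blob_b])

centroids, labels = kmeans(X, k=2)
print(centroids.round(2))
```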

Choosing k:

Elbow method: Plot within-cluster sum of squares, look for the “elbow”

Silhouette score: Measure cohesion vs. separation (>0.5 indicates good clustering)

Practical uses:

Customer segmentation by 2023 purchase patterns

Document clustering for content organization

Grouping products by attribute similarity

RFM (Recency, Frequency, Monetary) segmentation in marketing

Limitations:

Must specify k upfront

Assumes spherical clusters

Sensitive to initial centroid placement

Struggles with high-dimensional sparse data

    from sklearn.cluster import KMeans

    # X is assumed to be a numeric feature matrix (n_samples x n_features)
    kmeans = KMeans(n_clusters=4, random_state=42)
    clusters = kmeans.fit_predict(X)

Other Unsupervised Techniques

Additional clustering methods:

Hierarchical clustering: Builds dendrograms showing nested group relationships. Useful when the number of clusters is unknown.

DBSCAN: Density-based clustering that identifies arbitrary-shaped clusters and marks outliers as noise. Handles non-spherical clusters better than k-means.

Dimensionality reduction for visualization and preprocessing:

Principal component analysis (PCA): Linear compression retaining 80-95% variance in fewer dimensions

t-SNE: Stochastic neighbor embedding for 2D visualizations revealing manifolds in high-dimensional data

UMAP: Faster topology-preserving alternative to t-SNE (introduced 2018)
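In scikit-learn, passing a float between 0 and 1 as `n_components` tells PCA to keep just enough components to reach that share of variance. The data below is synthetic (20 features generated from 3 hidden factors), chosen so the compression is easy to see.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# 20 observed features driven by only 3 latent factors plus small noise.
latent = rng.normal(size=(500, 3))
mixing = rng.normal(size=(3, 20))
X = latent @ mixing + 0.05 * rng.normal(size=(500, 20))

# PCA is scale-sensitive, so standardize features first.
X_scaled = StandardScaler().fit_transform(X)

# A float in (0, 1) means "keep enough components for this much variance".
pca = PCA(n_components=0.95)
X_reduced = pca.fit_transform(X_scaled)
retained = pca.explained_variance_ratio_.sum()

print(X.shape, "->", X_reduced.shape, f"variance retained: {retained:.3f}")
```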

Anomaly detection models:

Isolation forest: Tree-based method isolating outliers via short path lengths

One-class SVM: Learns a boundary around normal data
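A quick way to get a feel for isolation forests is to plant a few obvious outliers in synthetic data and check that they are flagged. The `contamination` value here is an assumed guess at the outlier share; in practice it usually has to be tuned or estimated.

```python
import numpy as np
from sklearn.ensemble import IsolationForest

rng = np.random.default_rng(0)
# 200 "normal" points around the origin...
normal_points = rng.normal(size=(200, 2))
# ...plus five planted outliers far away from them.
planted_outliers = np.array(
    [[8.0, 8.0], [-8.0, 8.0], [8.0, -8.0], [-8.0, -8.0], [0.0, 10.0]]
)
X = np.vstack([normal_points, planted_outliers])

detector = IsolationForest(n_estimators=200, contamination=0.05, random_state=0)
labels = detector.fit_predict(X)   # +1 = inlier, -1 = outlier

outlier_flags = labels[-5:]        # predictions for the planted points
print("planted outliers flagged:", outlier_flags)
print("total points flagged:", int((labels == -1).sum()))
```

Because `contamination=0.05` asks for roughly 5% of points to be flagged, some borderline normal points are marked as well; that trade-off between missed anomalies and false alarms is the main tuning decision.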

Real-world examples:

Log analysis in 2020–2024 cloud systems detecting unusual access patterns

Manufacturing defect detection on assembly lines

Grouping posts or users in social platforms for moderation

Network intrusion detection flagging 99% of attacks

Specialized Model Families: Deep Learning, Time Series, and Generative Models

Beyond classic ML, modern systems use specialized model families tailored to sequences, time dependencies, and generation tasks. These models underpin the “headline” AI systems that dominate tech news-new LLMs, diffusion image generators, and sophisticated forecasting tools.

Deep Learning Architectures

Convolutional neural networks (CNNs): Process images through learned filters detecting edges, textures, and objects. ImageNet breakthroughs since AlexNet (2012) revolutionized image recognition. Now used in medical imaging diagnostics, autonomous vehicles, and industrial quality control.

Sequence models: Recurrent neural networks (RNNs) process sequential input data with memory. LSTMs and GRUs mitigate the vanishing gradient problem, letting them retain information over long spans (1,000+ timesteps in some benchmark tasks). They dominated natural language processing before transformers.

Transformers (Vaswani et al., 2017): A self-attention mechanism processes all positions in parallel. Powers BERT (2018) for understanding and GPT-3/4 for generation. The largest models are reported to reach the trillion-parameter range (GPT-4’s often-cited 1.7T figure is unconfirmed). Multimodal variants handle text, images, and code together.
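Self-attention is compact enough to sketch in NumPy. The version below is a single attention head with random weights and no masking or batching, so it shows the mechanism only; the dimension names (`seq_len`, `d_model`, `d_head`) are illustrative.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the max for numerical stability before exponentiating.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, W_q, W_k, W_v):
    """Single-head scaled dot-product self-attention."""
    Q, K, V = X @ W_q, X @ W_k, X @ W_v
    # Every position scores every other position in one matrix product.
    scores = Q @ K.T / np.sqrt(K.shape[-1])
    weights = softmax(scores, axis=-1)   # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
seq_len, d_model, d_head = 4, 8, 8
X = rng.normal(size=(seq_len, d_model))   # 4 token embeddings
W_q, W_k, W_v = (rng.normal(size=(d_model, d_head)) for _ in range(3))

output, weights = self_attention(X, W_q, W_k, W_v)
print(output.shape, weights.shape)
print(weights.sum(axis=1))
```

Because the scores for all positions come from one matrix multiplication, the computation parallelizes well on GPUs, unlike the step-by-step recurrence of an RNN.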

Real-world deployments 2020–2024:

Chatbots and virtual assistants

Code assistants (Copilot achieving 40% developer speedup)

Document summarization and search

Multimodal search systems combining text and vision

These models require large datasets and compute resources (GPUs/TPUs) but achieve state-of-the-art results on complex tasks involving computer vision and language understanding.

Time Series Machine Learning Models

Time series models explicitly consider time order and temporal dependencies, forecasting values like hourly energy usage, daily website traffic, or quarterly revenue.

Classical statistical methods:

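ARIMA (AutoRegressive Integrated Moving Average): Models autocorrelation in a series and uses differencing (the “integrated” part) to remove trends; on stable, well-behaved series it can reach MAPE below 5%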

SARIMA: Adds seasonal components

Prophet (Facebook, 2017): Additive model handling holidays and trends

Machine learning techniques for time series:

Gradient boosting and random forests with lag features and rolling statistics

LSTMs and temporal convolutional networks for multivariate sequences

Hybrid approaches combining statistical methods with ML often yield 10-20% improvement
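Tree-based models have no built-in notion of time, so the temporal signal has to be supplied as columns. A minimal pandas sketch of lag and rolling features follows; the daily sales figures and column names are made up.

```python
import pandas as pd

# Ten days of made-up unit sales.
sales = pd.DataFrame(
    {"units": [10, 12, 13, 15, 18, 21, 25, 24, 26, 30]},
    index=pd.date_range("2024-01-01", periods=10, freq="D"),
)

features = sales.copy()
features["lag_1"] = features["units"].shift(1)   # yesterday's value
features["lag_7"] = features["units"].shift(7)   # same weekday last week
# Rolling mean of the previous 3 days. shift(1) comes first so the
# current day's value never leaks into its own feature.
features["rolling_mean_3"] = features["units"].shift(1).rolling(window=3).mean()

# Rows without a full history contain NaN and are dropped before training.
model_ready = features.dropna()
print(model_ready)
```

The resulting table can be passed to any regressor, with `units` as the target and the other columns as inputs. Splits for evaluation should respect time order (train on earlier rows, test on later ones) rather than shuffling.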

Applications:

Revenue forecasting for 2024–2026 planning

Demand planning in retail (reducing inventory costs 15-25%)

Predictive maintenance using sensor streams in manufacturing

Financial volatility prediction for risk management

Evaluation metrics:

MAE and RMSE for point forecasts

MAPE (Mean Absolute Percentage Error) for relative accuracy

Coverage of prediction intervals for uncertainty quantification

Robust time series modeling is critical to data-driven planning-a recurring theme in AI strategy discussions.

Generative Models

Generative models learn a data distribution to create new, realistic samples-images, text, audio, or code. They’ve moved from research curiosity to production tools.

Key families:

Generative adversarial networks (GANs, 2014): A generator and a discriminator are trained against each other until the generator produces realistic images. DCGAN (2015) made this practical with convolutional architectures.

Variational autoencoders (VAEs): Learn compressed latent representations for generation and interpolation.

Diffusion models: Learn to reverse a gradual noising process, generating images by denoising from random noise. Powers DALL·E 2 (2022), Stable Diffusion (2022), and Midjourney v6 (2024).

Text generation with LLMs:

GPT-3, GPT-4, Claude, and Gemini produce human-quality text

Applications: Content drafting, research summarization, coding assistance

Perplexity scores below 10 on standard benchmarks

Commercial applications:

Marketing content generation at scale

Synthetic training data for ML models

Style transfer and rapid design prototyping

Product imagery and UI mockups

Emerging concerns (2023–2024):

Watermarking for synthetic media detection (95% detection rates with hashing)

Content authenticity verification

Regulatory frameworks around deepfakes and AI-generated content

From Training to Deployment: Lifecycle of a Machine Learning Model

The standard ML lifecycle walks through: problem definition, data collection, training models, evaluation, deployment, and monitoring.

Key phases:

Problem definition: What are you predicting? What metrics matter?

Data collection: Gather and clean training data (often 80% of total effort)

Model training: Fit parameters by minimizing the loss function on the training data

Evaluation: Cross-validation and holdout test sets with task-specific metrics

Deployment: Expose predictions via REST APIs, embed in products, or run batch scoring

Monitoring: Track drift, degradation, and real-world performance

Deployment paths since ~2017:

REST/gRPC APIs via microservices (Seldon Core, TensorFlow Serving)

On-device models for mobile and IoT (TensorFlow Lite, ONNX)

Batch scoring pipelines on data warehouses (Spark MLlib, Vertex AI)

MLOps practices:

CI/CD for ML models (automated testing, versioning)

Model registries tracking experiments and deployments

Drift monitoring detecting when new data diverges from training

A/B testing comparing model versions (10% traffic allocation typical)

Shadow mode running a new model on live traffic without serving its predictions to users
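Drift monitoring can start very simply: compare the distribution of a feature in live traffic against the training data. The sketch below uses SciPy's two-sample Kolmogorov-Smirnov test on synthetic data; the `drifted` helper and the 0.01 alert threshold are illustrative assumptions, and real monitoring setups typically track many features and metrics.

```python
import numpy as np
from scipy.stats import ks_2samp

rng = np.random.default_rng(0)
# Feature values seen at training time...
training_feature = rng.normal(loc=0.0, scale=1.0, size=2000)
# ...and two hypothetical batches of live data.
live_same = rng.normal(loc=0.0, scale=1.0, size=2000)      # no drift
live_shifted = rng.normal(loc=0.5, scale=1.0, size=2000)   # mean has moved

ALPHA = 0.01  # assumed alert threshold on the p-value

def drifted(reference, current, alpha=ALPHA):
    """Flag drift when the KS test rejects 'same distribution'."""
    result = ks_2samp(reference, current)
    return bool(result.pvalue < alpha)

shift_detected = drifted(training_feature, live_shifted)
print("same distribution flagged:", drifted(training_feature, live_same))
print("shifted distribution flagged:", shift_detected)
```

With large samples the test becomes sensitive to tiny, harmless shifts, so teams often pair it with an effect-size measure and alert only when both are elevated.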

Understanding this lifecycle helps you evaluate AI vendors, hire ML talent, and build internal modeling capabilities that actually ship to production.

Choosing the “Best” Machine Learning Model

There is no universally best model. Performance depends on data characteristics, constraints, and success criteria specific to your problem.

Key selection criteria

| Criterion | Questions to Ask |
|---|---|
| Predictive performance | What metrics matter? Precision? RMSE? AUC? |
| Interpretability | Do stakeholders need to understand decisions? |
| Training/inference speed | Real-time requirements? Batch acceptable? |
| Data volume | Thousands of rows or billions? |
| Feature dimensions | Dozens or millions of features? |
| Robustness | How noisy is the data? |
| Deployment constraints | Edge device? Cloud API? |

Practical guidance

Linear and logistic regression suit high-stakes, regulated domains requiring explanations (credit risk under GDPR, healthcare)

Gradient boosting excels on tabular data with complex feature interactions (advertising CTR, risk scoring)

Deep learning shines on unstructured data (images, text, audio) with sufficient scale

Start simple, measure rigorously, increase complexity only when needed

Practitioners typically compare several machine learning models via cross-validation and holdout test sets, then choose the one that best balances accuracy and business constraints.
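A side-by-side comparison of candidate models takes only a few lines with scikit-learn's `cross_val_score`. The dataset below is synthetic and the two candidates are arbitrary examples; swap in your own data, models, and scoring metric.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score

# Synthetic binary classification data standing in for a real dataset.
X, y = make_classification(
    n_samples=500, n_features=20, n_informative=5, random_state=0
)

candidates = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=100, random_state=0),
}

# 5-fold cross-validated AUC for each candidate.
cv_auc = {
    name: cross_val_score(model, X, y, cv=5, scoring="roc_auc")
    for name, model in candidates.items()
}

for name, scores in cv_auc.items():
    print(f"{name}: mean AUC {scores.mean():.3f} (std {scores.std():.3f})")
```

If the simpler model lands within the fold-to-fold noise of the more complex one, interpretability and serving cost are usually reason enough to prefer it. The final pick should still be confirmed once on a holdout test set that played no part in the comparison.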

Keeping up with new architectures and tools ensures teams periodically revisit whether a more modern model might outperform legacy baselines. That’s exactly why curated weekly updates beat daily noise.

Why Machine Learning Models Matter for Modern Organizations

ML models drive revenue growth, cost reduction, and new product capabilities across industries from 2015 through 2024 and beyond.

Concrete impact examples

Personalization: Netflix recommendation models drive 75% of viewer hours

Dynamic pricing: Ride-hailing and travel platforms optimize revenue in real-time

Predictive maintenance: Manufacturing reduces downtime 50% with sensor-based models

Risk scoring: Financial institutions cut fraud losses while reducing false positives 30-50%

Content generation: Marketing teams produce more ML-powered content at a fraction of traditional costs

Organizations that systematically experiment with ML models, measure impact, and operationalize successful ones build defensible data and product moats.

Strategic considerations

Evaluate AI vendors based on model capabilities and deployment practices

Hire ML talent who understand the full lifecycle, not just algorithms

Invest in internal modeling where competitive differentiation matters most

Recognize that artificial intelligence success depends on data quality and operational discipline, not just model sophistication

This conceptual understanding forms the basis for making informed decisions about where to invest in statistical methods and machine learning capabilities.

FAQ

How is a machine learning model different from a traditional software program?

Traditional software relies on explicit, hand-written rules. A developer writes “if income < $30,000 and debt_ratio > 0.5, then reject loan.” The logic is deterministic and manually specified.

Machine learning models learn rules from data automatically during the training process. You provide examples of approved and rejected loans, and the model discovers patterns that predict outcomes.

In 2024 production stacks, both often coexist. Hand-written code orchestrates workflows, validates inputs, and enforces safety checks. ML models handle pattern recognition and prediction where explicit rules would be impossible to write.

Debugging differs fundamentally: for ML models, you adjust data, features, and hyperparameters rather than editing specific lines of business logic.

How many types of machine learning models are there?

There’s no fixed number. Hundreds of algorithms and countless model variants exist, with new architectures published every year in machine learning research.

Most can be grouped into families: regression algorithms, classification algorithms, tree-based ensembles, clustering, deep learning, reinforcement learning algorithms, time series, and generative models.

Practitioners typically start by trying a few well-established families rather than chasing every new research model. A data scientist might try logistic regression, random forest, and XGBoost before exploring neural networks for a given tabular problem.

How do I decide which model family to try first on a new problem?

Start from the task:

Predicting numbers (sales, prices, demand): Begin with regression models: linear regression first, then gradient boosting

Predicting labels (fraud/no fraud, churn/retain): Start with classification: logistic regression, then random forest

Grouping or segmenting (customer types, document categories): Use clustering: k-means or hierarchical methods

Sequences and time: Time series models with lag features or LSTMs

Start with simple, interpretable baselines. If linear regression or a small decision tree solves the problem, you’re done. Escalate to random forests or gradient boosting when you need better performance.

Data size, feature count, and the need for explanations vs. raw accuracy should all influence decisions.

Do I always need big data and GPUs to use machine learning models?

No. Many effective models train well on modest datasets-thousands to tens of thousands of rows-using only CPUs.

Linear models, tree-based machine learning methods, and small neural networks run efficiently on standard hardware. A credit scoring model with 50,000 training examples trains in seconds on a laptop.

GPUs and very large datasets are mainly required for frontier deep learning models-large language models with billions of parameters or high-resolution image generators trained on millions of samples.

Don’t wait for web-scale data before starting. Even small, focused datasets can yield high-ROI models in real products. Many production systems run on surprisingly modest data.

How can I keep up with rapid changes in machine learning models without getting overwhelmed?

Focus on durable concepts first: model families, evaluation metrics, deployment patterns, and the ML lifecycle. These fundamentals change slowly even as specific architectures evolve.

Selectively track major new developments rather than every minor update. A new transformer architecture that achieves state-of-the-art on multiple benchmarks matters. A point release of a library usually doesn’t.

Rather than following daily noise and minor updates, professionals benefit from weekly, curated summaries of significant releases, benchmarks, and product launches.

That’s exactly the approach KeepSanity takes: one focused update per week summarizing only the most impactful AI model and tooling news across business, research, and infrastructure. No daily filler to impress sponsors. Zero ads. Just signal.